Photogrammetry for games

Over the last decade, character models and environments in CGI and video games have come on in leaps and bounds, thanks not just to hardware upgrades but also to the adoption of digitization technologies like photogrammetry, which turns photos and videos into detailed 3D models. In this article, we lift the lid on the topic, covering everything from workflow steps to the prospect of using 3D scanning as an alternative.

Introduction

A video game being played on a smartphone

Before we get into applications, you may well be asking: What is photogrammetry?

In short, the process involves taking overlapping images of a target object from several angles. By identifying the same points in these photographs and accounting for each camera’s position, focal length, and lens distortion, you can triangulate where those points lie in 3D space and reconstruct them as a 3D model.
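To make the triangulation idea concrete, here is a minimal sketch in Python. It assumes the camera positions and the viewing rays towards a matched point are already known, and it uses the simple midpoint method rather than whatever solver a given photogrammetry package actually runs. The function names and the two-camera setup are illustrative assumptions, not taken from any specific tool.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def triangulate_midpoint(c1, d1, c2, d2):
    """Return the point midway between the closest points of two viewing rays.

    c1, c2: camera centres; d1, d2: ray directions towards the matched feature.
    """
    w = [a - b for a, b in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom   # parameter along ray 1
    t = (a * e - b * d) / denom   # parameter along ray 2
    p = [ci + s * di for ci, di in zip(c1, d1)]
    q = [ci + t * di for ci, di in zip(c2, d2)]
    return [(pi + qi) / 2.0 for pi, qi in zip(p, q)]

# Hypothetical setup: two cameras one unit apart, both seeing the same feature.
target = [0.5, 0.2, 5.0]
cam1, cam2 = [0.0, 0.0, 0.0], [1.0, 0.0, 0.0]
ray1 = [t - c for t, c in zip(target, cam1)]
ray2 = [t - c for t, c in zip(target, cam2)]
recovered = triangulate_midpoint(cam1, ray1, cam2, ray2)
```

With real photos, the rays would come from pixel coordinates and calibrated camera parameters, and measurement noise means the two rays rarely intersect exactly, which is why the midpoint between them is taken.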

In the world of video gaming, photogrammetry is increasingly being used to capture and add real-life props, locations, and even actors’ faces into games. With the technology, developers are now not only able to build worlds with photorealistic graphics, but they can also do so in a fraction of the time it would otherwise take to create them manually.

Before turning to photogrammetry, graphics artists often had to start from scratch on such 3D assets, in a workflow that caused them to compromise, balancing aesthetics against gameplay and lead-time restrictions.

Traditional ‘white box’ design methods, in which coding needed to be continually tested as a game evolved around it, also created doubt as systems couldn’t be fully assessed until the project started to come together. By contrast, using photogrammetry, studios can now capture and upload models to create world ‘kits’ ahead of time. This helps developers to be better prepared and deliver on schedule.

Over the remainder of this article, we will break down the individual workflow steps some developers are undertaking to make this possible. We’ll also delve into the different technologies it’s possible to deploy as a means of carrying these out, and assess the potential advantages of each approach.

How is photogrammetry used in video games?

With high graphical fidelity and short launch cycles being key to success in the modern gaming industry, the benefits of adopting photogrammetry there are clear. As such, the technology is quickly becoming industry standard. But how does the whole process work in practice?

As with any other photogrammetry application, the workflow starts with an object being captured from various angles in a linear, matrix-like pattern, with each photograph substantially overlapping the next. When capturing larger objects, developers can choose to start by taking 360° wide shots, before narrowing their field of view to get up close and capture fine details. That said, it’s generally easier to use long-range devices, as they’re better suited to this kind of application.
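As a rough planning aid, the number of photos needed for one row of such a grid can be estimated from the camera’s field of view, its distance from the surface, and the overlap you are aiming for. The sketch below assumes a camera held square-on to a flat surface; the function names and example values are illustrative, not taken from any particular capture guide.

```python
import math

def footprint_width(distance_m, fov_deg):
    """Width of the surface strip seen by one photo taken square-on."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)

def shots_along_row(row_length_m, distance_m, fov_deg, overlap):
    """Photos needed to cover one row when neighbouring frames share `overlap`."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    width = footprint_width(distance_m, fov_deg)
    if row_length_m <= width:
        return 1
    step = width * (1.0 - overlap)   # how far the camera moves between shots
    return math.ceil((row_length_m - width) / step) + 1

# Hypothetical example: a 12 m wall shot from 3 m with a 60° lens and 70% overlap.
shots = shots_along_row(row_length_m=12.0, distance_m=3.0, fov_deg=60.0, overlap=0.7)
```

Raising the overlap shrinks the step between shots, so the photo count climbs quickly as the overlap approaches 100%.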

Photogrammetry being used in the video game industry. Image source: smns-games.com

That said, achieving all this is easier said than done, and game studios have to avoid a number of pitfalls in order to ensure they get complete models, with the right shape and proportions. For a start, developers need to consider the equipment they plan to use. If utilizing a high-resolution camera, they will have to make sure that photos overlap.

When using a Light Detection and Ranging (LiDAR) device, they’ll also have to consider what an object’s made of. If this happens to be retro-reflective material, for example, its surface is likely to bounce light back to the scanner’s receiver with minimal signal loss. On the flipside, if said object is shiny or transparent, it’s going to be trickier to digitize.

Key point

Having long been used in surveying to build topographic maps, photogrammetry is now proving a useful tool for video game developers creating 3D environments.

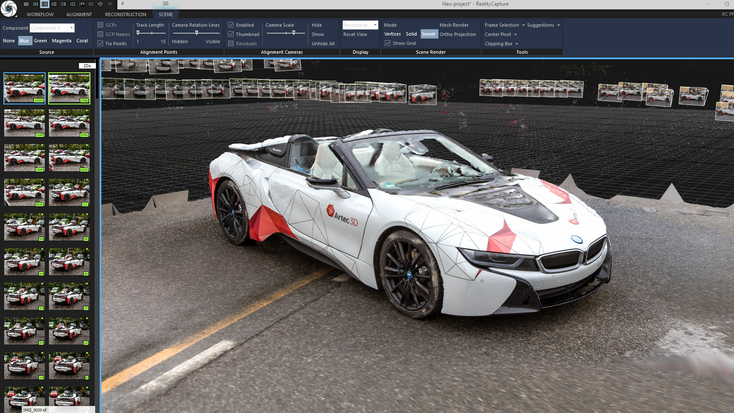

Once developers have tackled these issues and captured a set of sufficiently overlapping images, they then need to process them, beginning with a program like Adobe Photoshop. Doing so allows aspects such as lighting and shadows to be tweaked so that end models match the tone of the game they’re designed to furnish. After color correction, the images are often exported to programs like RealityCapture, where they can be aligned into a point cloud and transformed into a textured 3D mesh.

Initial models can later be ‘retopologized,’ a process that sees the polygon counts of models reduced so they’re easier to render in-game. Finally, once the information stored in these 3D meshes is baked into texture files, their surfaces can be tweaked in a number of ways to make them appear more realistic.
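To illustrate what reducing a polygon count involves, here is a small sketch of vertex clustering, one of the simplest mesh decimation techniques. It is a stand-in for illustration only: retopology tools used in production rely on more sophisticated methods and artist input, and the function name and grid mesh below are assumptions.

```python
def cluster_decimate(vertices, triangles, cell_size):
    """Merge vertices that share a grid cell and drop triangles that collapse."""
    cell_to_new = {}   # grid cell -> index of the merged vertex
    sums = []          # running [x, y, z, count] for each merged vertex
    remap = []         # old vertex index -> merged vertex index
    for x, y, z in vertices:
        key = (int(x // cell_size), int(y // cell_size), int(z // cell_size))
        if key not in cell_to_new:
            cell_to_new[key] = len(sums)
            sums.append([0.0, 0.0, 0.0, 0])
        acc = sums[cell_to_new[key]]
        acc[0] += x
        acc[1] += y
        acc[2] += z
        acc[3] += 1
        remap.append(cell_to_new[key])
    # Each merged vertex sits at the average of the vertices it replaces.
    new_vertices = [(s[0] / s[3], s[1] / s[3], s[2] / s[3]) for s in sums]
    new_triangles, seen = [], set()
    for a, b, c in triangles:
        a, b, c = remap[a], remap[b], remap[c]
        if a == b or b == c or a == c:
            continue   # two corners merged, so the triangle has no area
        key = frozenset((a, b, c))
        if key not in seen:
            seen.add(key)
            new_triangles.append((a, b, c))
    return new_vertices, new_triangles

# Hypothetical input: a flat 5 x 5 vertex grid (32 triangles), spacing 0.25.
verts = [(0.25 * i, 0.25 * j, 0.0) for j in range(5) for i in range(5)]
tris = []
for j in range(4):
    for i in range(4):
        v = j * 5 + i
        tris += [(v, v + 1, v + 6), (v, v + 6, v + 5)]
low_verts, low_tris = cluster_decimate(verts, tris, cell_size=0.5)
```

A larger `cell_size` merges more vertices and gives a lighter mesh at the cost of surface detail, which is why the fine detail is typically baked into texture maps afterwards.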

You can combine them with ‘masks’ that add visual effects such as shadows and wear, and even use procedural or texture maps to better simulate object geometries, before sending them into popular gaming engines such as Unreal Engine.

Enhance your workflow with AI Photogrammetry

New to Artec Studio, AI Photogrammetry takes many of the same workflow steps and streamlines the entire process. Upload any photoset or video, and Artec Studio will take care of the rest. This begins with sparse reconstruction, where the software creates an initial model for users to tinker with. From there, it’s simply a case of adjusting the model boundary box, and hitting the dense reconstruction button.

This generates a realistic 3D mesh in real time, so you can see it ‘grow’ before your eyes. Of course, the resulting mesh will still need to go through all the same texturing and other post-processing steps as those created with regular photogrammetry. But AI Photogrammetry does offer some unique benefits: it’s open to image data captured by virtually any device (even with your smartphone), and delivers incredible realism as well as relatively high accuracy – lending it further applications outside of video gaming.

Another of the platform’s strengths is its hardware versatility. Artec Studio is compatible with LiDAR, laser, and structured-light 3D scan data, so the potential is there for combined datasets that deliver unprecedented detail.

Examples of photogrammetry in video games

Photogrammetry itself is an evolving but not entirely new technology. The process has long been deployed in areas such as quality control and surveying, but it has only gained traction in gaming fairly recently. One of the first major studios to truly embrace photogrammetry was DICE, a Swedish developer charged with rebooting the hugely popular Star Wars: Battlefront franchise in 2015.

Facing a tight launch schedule, a demand for 60 FPS gameplay, and the need to get to grips with a new console generation, DICE was not short of challenges heading into production. However, the studio still wanted to create a world that was faithful to its source material. To achieve this, the developer delved into the archives to fish out props from the original Star Wars movies, before 3D modeling them with photogrammetry.

DICE Star Wars Battlefront video game characters. Image source: battlefront.fandom.com

Using this approach, DICE was able to more than halve the time it took to digitize each asset when compared to its previous title, Battlefield 4. What’s more, the studio’s work allowed it to develop ‘level construction kits’ made up of Star Wars props, character models, and scenery, which formed the basis of the final game. DICE also found that its approach let it establish a workflow well ahead of launch, which helped it get ahead of internal deadlines.

Key point

Photogrammetry is not just used to build sweeping 3D environments, but many of their component parts, including props, foliage, and character models.

Since the developer’s pioneering work first came to light, other blockbuster video games have been built in a similar way. For its own relaunch of the Call of Duty: Modern Warfare franchise in 2019, Infinity Ward discovered that it could use data gathered via photogrammetry to carry out a ‘tiling’ process that improved surface detail resolution.

As the development of the title continued, the studio went on to experiment further. In some cases, team members were scanned as a basis for corpse models, and they even used drone-flown scanners to capture larger environments. With photogrammetry ultimately enabling the rapid creation of prop, scenery, gun, vehicle, and character models, the project showed just how far the technology’s video game applications have come.

Photogrammetry software for CGI & video games

Earlier in this article we mentioned RealityCapture, but it’s not the only photogrammetry software that enables you to create 3D models for video games with a high level of accuracy. Industry-leading game developer Electronic Arts (EA), for instance, is known to use PhotoModeler. Utilizing the software’s Idealize functionality, it’s possible to remove lens distortions during processing. This is said to make it far easier to create crisp game and animation backgrounds that better match their source material.

The program also features an automatic point cloud-generating algorithm which yields models with highly accurate textures that can be exported in widely used CAD formats. That said, the program is not strictly built for photogrammetry. As a result, the software lacks the automated photo-to-solid workflow of dedicated competitors.
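For readers curious about what removing lens distortion involves, the sketch below uses a two-coefficient radial model, one widely used way of describing how a lens bends straight lines. It is an illustration under stated assumptions, not a description of how PhotoModeler’s Idealize feature works internally; the coefficients `k1` and `k2` and the function names are hypothetical.

```python
def distort(x, y, k1, k2):
    """Apply radial distortion to a point in normalized image coordinates."""
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor

def undistort(xd, yd, k1, k2, iterations=20):
    """Invert the radial model by fixed-point iteration.

    Starts from the distorted point and repeatedly divides out the
    distortion factor evaluated at the current estimate.
    """
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y

# Hypothetical coefficients for a lens with mild barrel distortion.
k1, k2 = -0.2, 0.05
ideal = (0.3, 0.2)
observed = distort(ideal[0], ideal[1], k1, k2)
restored = undistort(observed[0], observed[1], k1, k2)
```

The iteration converges quickly for mild distortion like this; strongly distorted wide-angle lenses may need a more robust solver.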

Car photogrammetry in RealityCapture

For those seeking programs designed for the digitization of stunning scenery, Unity markets SpeedTree. Using everything from 3D art tools to procedural generation algorithms, the software allows plant life to be faithfully recreated. It’s therefore no surprise that it has been picked up by gaming stalwarts like Bungie and Ubisoft.

Likewise, Agisoft offers Metashape, a 3D modeling program capable of processing elaborate image data, captured both close up and at a distance. While it’s more often used in research, surveying, and defense than video game development, the program’s auto-calibration feature and multi-camera support do make it an attractive option for those digitizing complex objects or creating large 3D environments.

However, there are a few drawbacks to using current photogrammetry packages. Many are marketed in tiers, meaning that in some cases, users will find they have to upgrade to access certain features. In others, programs may be missing functionalities altogether. This raises the bar to entry for new adopters, as it places the emphasis on them to know which supplemental packages they’ll need for a given project.

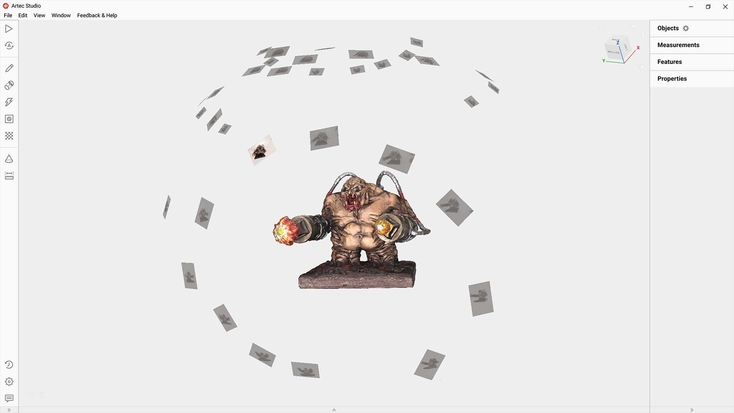

Photogrammetry of a mancubus, a monster featured in the classic Doom video game series

If you’re looking for a broader, more accessible software package, it’s also worth checking out Artec Studio. Though it’s better known for processing data captured by 3D scanners, the latest version introduces AI Photogrammetry. As mentioned earlier, this feature democratizes and broadens the appeal of 3D data capture.

Harnessing the power of AI, the technology allows users to create 3D models that are reasonably geometrically accurate and exceptionally realistic with any photos or video. AI Photogrammetry even captures objects with complex, translucent, shiny, and featureless surfaces. This makes resulting models perfect for the video game industry, where appearance is everything.

Key point

Software is vital to 3D world-building, and program choice often determines the way captured images can be collated and presented in-game.

When it comes to optimizing the quality of these models, the program’s Autopilot functionality makes it easier than ever for newcomers, by offering to pick the most effective data processing algorithm on their behalf based on a few checkbox inputs.

Additionally, with Artec Studio photo-texturing, users can improve model color clarity by applying textures to 3D scans from high-resolution photographs. Technology-agnostic photogrammetry makes it possible to capture large objects and landscapes as well as use drone-captured imagery, opening the door to animation at scale.

3D scanning as an alternative

While photogrammetry has proven a capable video game modeling tool, it does have its limitations. The accuracy of resulting 3D assets is dependent on the resolution of the cameras used to photograph the target object. Changeable weather, plus calibration, angle, and redundancy issues, can all lead to the creation of undetailed models. What’s more, capturing and combining overlapping images is naturally time-consuming. These issues raise the question: is there a more efficient 3D modeling method?
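The link between camera resolution and achievable detail can be estimated with the standard ground sample distance (GSD) calculation, which gives the real-world size covered by one pixel. The sketch below is a back-of-the-envelope aid only; the camera figures in the example are hypothetical.

```python
def ground_sample_distance(sensor_width_mm, focal_length_mm, image_width_px, distance_m):
    """Real-world size, in metres, covered by one pixel at the given distance."""
    return (sensor_width_mm * distance_m) / (focal_length_mm * image_width_px)

# Hypothetical full-frame camera: 36 mm sensor, 50 mm lens, 6000 px wide images.
gsd_near = ground_sample_distance(36.0, 50.0, 6000, distance_m=2.0)
gsd_far = ground_sample_distance(36.0, 50.0, 6000, distance_m=20.0)
```

At 2 m each pixel in this example covers roughly 0.24 mm of the surface, while at 20 m it covers roughly 2.4 mm, so details smaller than that cannot be resolved from those photos.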

World War 3: Late-stage character development following Artec Leo & Space Spider scanning, Glock 17 pistol scanned with Artec Space Spider, Face scans made with Artec Leo

Those seeking to accelerate the process of creating 3D models for video games might want to consider adopting 3D scanning. Featuring impressive scan speeds of up to 44 frames per second, cutting-edge devices like the Artec Leo offer photogrammetry users a potential means of accelerating their workflow.

In the past, studio The Farm 51 has achieved just this. By pairing the Leo with another 3D scanner, the Artec Space Spider, the developer has been able to create incredibly realistic World War 3 video game models. In fact, switching from the photogrammetry approach used to develop its previous titles allowed the firm to generate each character, vehicle, and weapon model for its online shooter in just a few hours.

World War 3 gameplay and an early-stage character development scan being reviewed with Artec Leo

The popular Xbox video game franchise Hellblade also features full-body 3D models created via Artec 3D scanning. Developed with the help of motion capture technology at Microsoft-owned studio Ninja Theory, the game features a jaw-dropping in-game replica of lead actor Melina Juergens. Thanks to the Artec Eva’s impressive flexibility and accuracy, the developer was not only able to make the character appear lifelike but to design them with skin and muscles that moved much like a real person’s.

Nowadays, scale is no obstacle to 3D modeling either. With Artec Micro II, you can create digital versions of tiny objects, capturing intricate details with a resolution of up to 40 microns, before using them to populate in-game worlds. On the other end of the spectrum, the long-range Artec Ray II allows you to 3D scan massive objects, even an entire rescue helicopter, should you need to. With the technology continuing to find new large-scale modeling applications, who’s to say it can’t be used to transport such vehicles into the virtual realm?

A 3D model of an MD-902 and the helicopter being 3D scanned with Artec Leo

Take the team at Creative Mesh. Using Leo & Ray II together, the developer has created incredibly lifelike DLC for Farming Simulator, a favorite among PC gamers. With the developer having also captured everything from vehicles like planes to wider areas, the sky really does appear to be the limit for 3D data capture in this space.

Combining photogrammetry with 3D scanning

Another option is to use photogrammetry alongside 3D scanning. This could mean capturing a wider scene with photogrammetry, then digitizing individual items within this area using a professional 3D scanner. As photogrammetry works with virtually any photoset, you can also deploy drones, capture real-world scenes, and recreate them in video game form on a truly massive scale.

When merging photogrammetry and 3D scanning datasets, Artec Studio is head and shoulders above the rest. Not only does it allow for the combination of entirely different mesh data, it also features photo-texturing, so you can apply textures to models by hand.

When you factor in AI Photogrammetry, Artec Studio really does offer the best of both worlds: 3D scanning, photogrammetry, or both, if your application demands it.

Conclusion

In light of the practical applications outlined above, it’s clear that both photogrammetry and 3D scanning are helping push graphical boundaries in the gaming industry. As each blockbuster built using scanning has relied on slightly different 3D platforms, there’s not currently an all-purpose solution that can repeatedly be rolled out to create models for any given video game – although Artec Studio is closing in on this title!

Instead, studios are faced with a choice of hardware and software packages, with some designed specifically for game development, and others built for wider applications. When deciding between these, developers need to consider factors such as the type of environments they want to recreate in-game, as well as the graphical fidelity they want objects in their virtual world to have.

Fortunately for such creators, the rising adoption of photogrammetry and 3D scanning in gaming has led to the launch of products that meet varying needs. The technologies’ proven ability to faithfully reproduce objects of all shapes and sizes, ready for uploading, tweaking, and integrating into virtual worlds, makes them attractive for accelerating the development of new, cutting-edge games. With AI also threatening to automate the 3D modeling process, features like AI Photogrammetry will only add to developers’ toolboxes.

Considering all of the above, it’s an exciting time for photogrammetry and 3D scanning in the CGI and video game industries – it will be fascinating to see how the technologies continue driving innovation in these applications and more.
